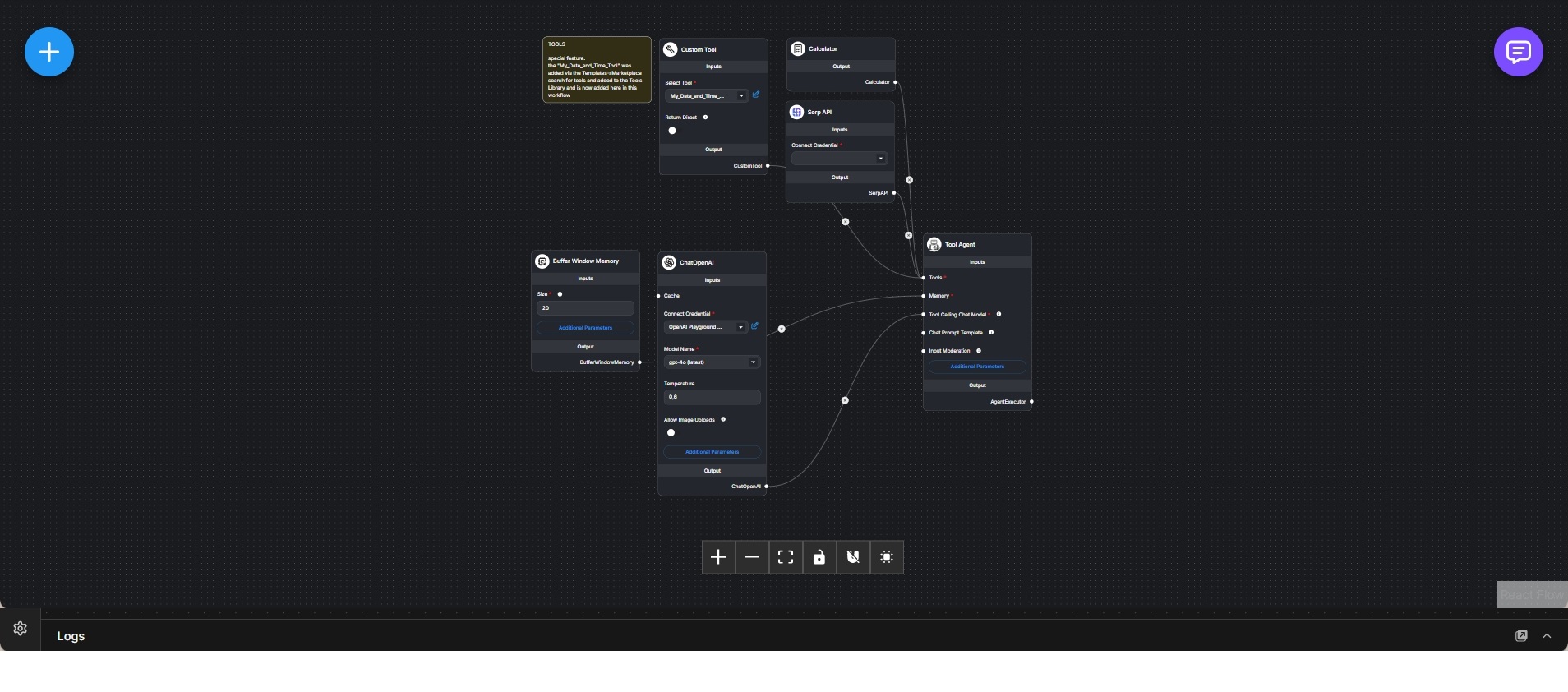

Cloud-Based Research Tool Agent with OpenAI, Memory, and External Utilities

Tool-enabled research agent that combines OpenAI language model reasoning with calculators, search APIs, and custom tools for grounded analysis.

This workflow implements a cloud-based research agent that augments language model reasoning with deterministic tools and external data sources. It follows the same architectural pattern as the local Ollama variant, but uses an OpenAI-hosted chat model as the reasoning engine.

The ChatOpenAI node connects the workflow to an OpenAI-hosted chat model that supports tool calling and multi-step reasoning. Its sampling parameters, such as temperature, are set to balance analytical precision against flexible interpretation of user intent.

A buffer window memory component maintains short-term conversational context, enabling multi-step research workflows where intermediate findings and tool outputs influence subsequent reasoning steps.
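To make the windowing behavior concrete, here is a minimal Python sketch of a buffer window memory. It illustrates the idea only and is not the workflow's actual node implementation; the class name `BufferWindowMemory` and the window size `k` are assumptions chosen for this example.

```python
from collections import deque


class BufferWindowMemory:
    """Hypothetical sketch: keeps only the most recent k exchanges."""

    def __init__(self, k=3):
        # A deque with maxlen silently drops the oldest entry when full.
        self.turns = deque(maxlen=k)

    def add(self, user_message, agent_message):
        self.turns.append((user_message, agent_message))

    def context(self):
        # Render the retained window as text to prepend to the next prompt.
        return "\n".join(f"User: {u}\nAgent: {a}" for u, a in self.turns)


memory = BufferWindowMemory(k=2)
memory.add("q1", "a1")
memory.add("q2", "a2")
memory.add("q3", "a3")  # pushes the first exchange out of the window
print(memory.context())
```

A small window keeps prompts short and inexpensive, at the cost of forgetting earlier findings, so the window size should match how many steps a typical research task takes.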

The agent is configured as a Tool Agent with access to a controlled set of tools. These include a calculator for precise arithmetic, a SERP API for real-time web search, and a custom tool that exposes additional logic such as date and time handling. Tools are explicitly registered and only invoked when the agent determines they are necessary.
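Explicit tool registration can be sketched in Python as a plain dictionary that maps tool names to functions. This is an illustration under stated assumptions, not the workflow's configuration: the names `TOOLS`, `call_tool`, `calculator`, and `current_utc_date` are hypothetical, and the web search tool is left out because it needs an API key and network access.

```python
import ast
import operator
from datetime import datetime, timezone

# Arithmetic operators the hypothetical calculator tool accepts.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.Pow: operator.pow,
    ast.USub: operator.neg,
}


def calculator(expression):
    """Evaluate plain arithmetic by walking the syntax tree, without eval()."""

    def ev(node):
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.operand))
        raise ValueError("unsupported expression")

    return ev(ast.parse(expression, mode="eval").body)


def current_utc_date(_unused=""):
    """Custom tool sketch: today's date in UTC, ISO formatted."""
    return datetime.now(timezone.utc).date().isoformat()


# Only tools listed here can be invoked by the agent.
TOOLS = {"calculator": calculator, "current_date": current_utc_date}


def call_tool(name, argument):
    if name not in TOOLS:
        raise KeyError(f"tool not registered: {name}")
    return TOOLS[name](argument)


print(call_tool("calculator", "2 * (3 + 4)"))
print(call_tool("current_date", ""))
```

Keeping the registry explicit means a model response that names an unknown tool fails loudly instead of triggering arbitrary code.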

During execution, the agent decides when to call external tools, adds their outputs to the reasoning context, and synthesizes a response grounded in those outputs rather than in the model's unsupported assumptions. The resulting answers are context-aware and backed by retrieved or computed data.
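The decide, call, and synthesize cycle can be sketched as a short loop. In this Python example the chat model is replaced by a rule-based stand-in (`fake_model`) so the code runs without network access; the decision format, `run_agent`, and the toy `multiply` tool are all assumptions made for illustration and do not reflect the real model or node interfaces.

```python
def fake_model(question, observations):
    """Stand-in for the chat model: requests a tool first, then answers."""
    if not observations:
        return {"tool": "multiply", "input": "12 * 7"}
    return {"answer": f"The result is {observations[-1]}."}


def multiply(expression):
    """Toy tool that handles a single 'a * b' expression."""
    left, right = expression.split("*")
    return float(left) * float(right)


TOOLS = {"multiply": multiply}


def run_agent(question, model, tools, max_steps=5):
    observations = []
    for _ in range(max_steps):
        decision = model(question, observations)
        if "answer" in decision:
            # The model has enough information to respond.
            return decision["answer"], observations
        # Otherwise run the requested tool and feed the result back.
        result = tools[decision["tool"]](decision["input"])
        observations.append(result)
    raise RuntimeError("no final answer within max_steps")


answer, trace = run_agent("What is 12 times 7?", fake_model, TOOLS)
print(answer)
```

The `max_steps` limit is a simple guard against a model that keeps requesting tools without ever producing a final answer.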

This workflow demonstrates how cloud-based language models can be used as orchestration layers rather than sources of truth, delegating factual retrieval and computation to specialized tools. It is well suited for research assistants, analytical workflows, and production scenarios where accuracy, scalability, and access to up-to-date information are critical.